Are we having the wrong nightmares about AI?

There are lots of potential nightmares when it comes to artificial intelligence. In a recent Torment Nexus I wrote about how the U.S. government seems hell-bent on using AI to engage in mass surveillance of American citizens (and probably lots of other people as well) and also to pilot autonomous murder drones. Obviously both of these things would be bad, especially given the fact that AI models love to hallucinate or "confabulate," as AI pioneer Geoffrey Hinton likes to call it. And then there are the science-fiction-style nightmares — like the invention of Skynet, the all-powerful AI from the Terminator movies, or the killer robots from the movie I, Robot (which were controlled by a human being, to be fair). Or the somewhat bizarre nightmares of the effective altruism crowd, such as the paperclip problem or the Roko's Basilisk thought experiment, which posits that if there is an all-powerful AI, it might be mad that we didn't bring it into being sooner, and punish anyone who wasn't spending every minute of their day doing so.

There's also just the general nightmare around what happens if an AI becomes conscious. As I wrote in another recent Torment Nexus, a large part of the discussion is concentrated on whether an AI could become conscious in the way we understand that term, and there is also some debate over whether maybe some of the current ones have already achieved that goal. This is connected to the debate over whether we are close to achieving AIs with what some call "artificial general intelligence" or AGI — which is usually understood to mean intelligence that can do most of the tasks that an average human being can, across a wide range of skills, as opposed to AIs that are good at math or code. My favourite part of this discussion is that it reinforces the fact that we — even experts in the fields of psychology and sociology — aren't a hundred percent sure what consciousness consists of, and how to prove that human beings have it, let alone whether AIs do. And if an AI does become conscious in a way that we recognize? How should we respond to it, if at all? What does it require of us? I've written about that as well.

Then there are more prosaic nightmares about the potential disruption caused by AI, such as the impact on the job market and the economy. We've already seen a hint of that impact, whether it's on journalists or programmers or marketing copywriters — companies like Block and Atlassian have decided they don't need as many employees (although some believe that these layoffs are for other reasons, such as overspending on hiring during times of low interest rates, and that AI is just an excuse). For the near future at least, economists expect that there will always be a need for humans in the loop, as the saying goes, if only to double-check for hallucinations or check the AI's work in some other way. But in the longer term, it seems at least possible that a large category of jobs could either go away entirely or become significantly reduced, to the point where the human in the loop is just a babysitter or someone who checks a box. So that's a separate kind of nightmare from the "giant robots exterminate mankind" variety.

At last year's NeurIPS conference in San Diego, the largest and most prestigious conference on artificial intelligence in the U.S., Zeynep Tufekci — a professor of sociology and public affairs at Princeton and a columnist for The New York Times — gave a keynote in which she argued that we are focusing on the wrong kind of AI nightmares. I haven't been able to find a video version of her talk (there is one on the NeurIPS website but it is available only to attendees of the conference), but luckily Jessica Hullman attended the conference and wrote about Tufekci's presentation on her Substack blog, which is called Epistemic Jetsam (epistemic meaning relating to knowledge and jetsam meaning unwanted items thrown overboard by a ship's passengers or crew). Hullman is a professor of computer science and a fellow at the Institute for Policy Research at Northwestern University. As Hullman describes it: "I was writing a blog post where I was going to reference Zeynep Tufekci’s 2025 NeurIPS keynote, and realized there isn’t a solid synopsis online. So here’s mine." An excerpt:

Tufekci’s through-line is that the dominant AI fears (and hopes) are misaligned: they fixate on “AGI,” superintelligence, or head-to-head “can it beat humans at X?” benchmarks. Her argument is that the destabilizing risk doesn’t require AI to be better than humans in any global sense. It’s enough for AI to be good enough, cheap, fast, and deployable at scale, which she calls in the talk and elsewhere “Artificial Good-Enough Intelligence.”

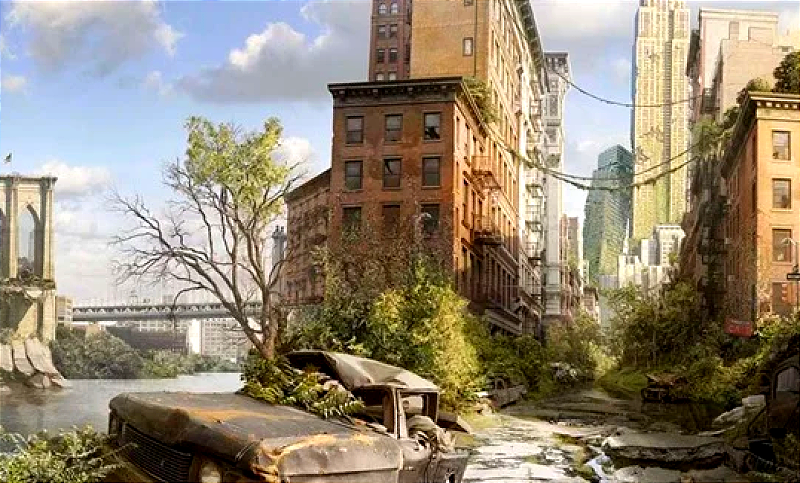

Second-order effects

Tufekci argued that the risk of artificial good-enough intelligence is not that it destroys humanity overnight or builds a fleet of killer robots, but that it slowly but surely reshapes society by breaking societal conventions around effort, sincerity, authenticity, and credibility. And once these signals erode, Hullman writes, "we don’t automatically get something better. We scramble to replace the old filters with new ones. And the transition from an old to a new way of doing things can be very costly, even if the old way of doing things wasn’t great." In a nutshell, Tufekci says, the early conversations that people tend to have about the impacts of a disruptive new technology are almost always incorrect, or are at least focused on the wrong things. In other words, they focus on familiar benchmarks and incremental changes when looking at the potential technological impact, and ignore the kind of systemic second-order effects that matter.

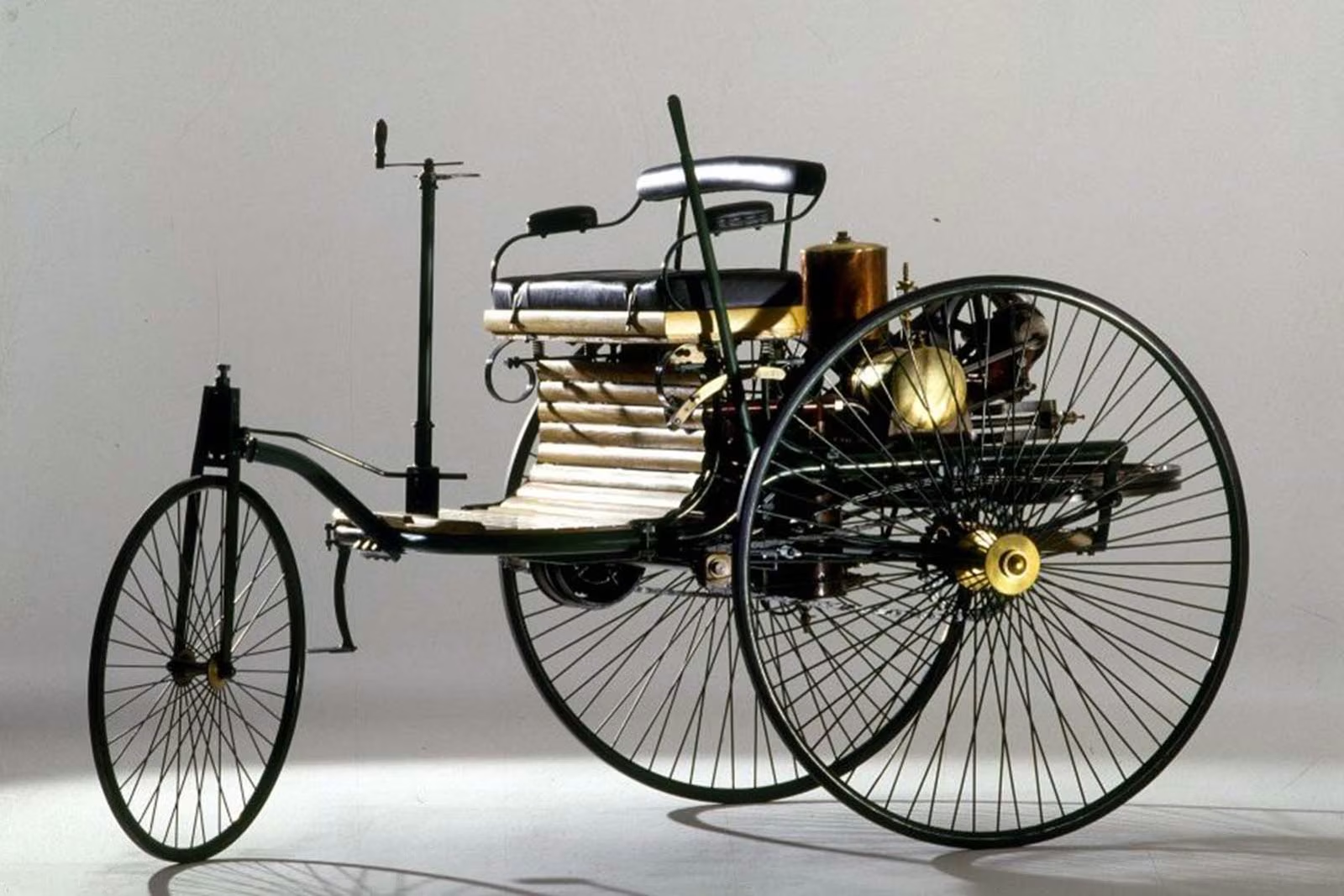

According to Hullman's synopsis, Tufekci looked at a couple of the most advanced and disruptive technological changes that we are familiar with: namely, Gutenberg's invention of the modern printing press in the 1400s, and the invention of the internal-combustion engine automobile in the 1800s. In both cases, smart people who were perhaps a little too close to the situation came to the wrong conclusion about what form the disruption was going to take. Many academics expected that the printing press would help entrench the power of the church, because it would allow religious leaders to print more bibles and other holy texts faster, and that would spread the word to more potential converts — not to mention printing indulgences, which gave those who paid for them a kind of medieval "get out of purgatory free" card. Instead, dissidents like Martin Luther used it to print criticism of the church and launched the Reformation.

As for the automobile, most people are probably aware of what Henry Ford allegedly said when asked whether he thought about what people wanted when he built his cars: "If I had asked people what they wanted," he allegedly said, "they would probably have said a faster horse" (Steve Jobs had a similar response, saying most consumers don't even know what they want until you give it to them, and that was certainly the case with the iPhone). Tufekci told the conference that when cars were first introduced, many people were preoccupied with whether horseless carriages would be faster than those with horses, and when asked about the potential effects, they focused on how cities would have less horse manure. But the reality is that the shift to cars changed cities and society in a hundred different ways, from the redesign of streets and logistics, to the way that the automobile opened up the country and allowed people to commute from place to place.

One of my favourite examples of the disconnect between the way people think about a new technology and the way it is ultimately used is the telephone. When it was first demonstrated by Alexander Graham Bell, it was widely ridiculed as a useless toy or a "scientific curiosity" — even Bell's assistant thought that phones might best be used in factories to make announcements to the employees (Bell himself had business uses in mind, but he also thought that people would answer the phone by saying "Ahoy!" — an idea that was quickly overtaken by Thomas Edison's suggestion of saying "hello"). Some early predictions were that phones would be used primarily as entertainment devices in the home, with people picking up the receiver to get news updates and so on, like the Parisian "Theatrophone" service, or London's Electrophone, which broadcast music and information to people's homes and to speakers located in public places.

The comments about Bell's new invention being a scientific curiosity or a useless toy, of course, reminded me of something the entrepreneur Chris Dixon — now a partner at the Silicon Valley venture capital outfit Andreessen Horowitz — wrote in 2010 about how "the next big thing always starts out looking like a toy." As I mentioned in a recent edition of Torment Nexus, this was a paraphrase of the disruption theory of Clay Christensen, a professor at Harvard Business School. Christensen argued that disruption almost always starts at the low end of a market, with simple products or applications that can be easily and cheaply replicated, which provides enough revenue for the disruptor to move upwards and eventually take on the market leaders. The fact that Amazon, a trillion-dollar corporation, started by selling books is a good example, or the way that most Asian automakers started with cheap, low-end cars.

The good enough AI

One thing that really hit home for me about Tufekci's presentation was when Hullman described how Tufekci connected the expectations vs reality theme to some of the early optimism about social media and democracy, which "underestimated how quickly incentives and tactics would evolve." I remember being excited about Twitter and other tools, including Facebook, and how they were used during revolutions and uprisings like the Arab Spring in Egypt and elsewhere — something Tufekci wrote about eloquently at the time — and thinking that these kinds of tools could really change things for the better. Unfortunately, uprisings like the Arab Spring were quickly quashed, the army and dictators returned to power, and social media soon attracted all kinds of terrible people, who became just as adept at using the same tools, if not more so.

Another of Tufekci's points, Hullman said, was that the artificial general intelligence dialogue sets up the expectation that "there is a line representing humanness and AI is slowly approaching that line. In reality it’s always going to be a different thing, and the relationship much more complicated." In effect, we have a foreign kind of intelligence that is reproducing things we consider human, but doing so in a completely un-human way. Tufekci also made a point that I think is crucial to our understanding of what is happening with AI and how we are running out of time to deal with it: namely, that social disruption "doesn’t wait for a model that is uniformly excellent; it arrives when a system is useful enough to substitute into institutional workflows."

As a society we have come to rely on many built-in mechanisms that assume only humans can generate outputs with certain properties. LLMs break our ability to conclude when there is proof of effort. We make high schoolers write essays and do math assignments not because we care about the essay or because we don’t know the problem set answers, but because the effort trains them in a certain way. We read customized cover letters as an important signal of interest, because it has traditionally been hard to make a good one, and you could only do it for so many jobs you applied for. Gatekeeping is inevitable, and when the old mechanisms stop working, other measures will step in, like relying on the prestige of the candidate’s institution or their connections.

Ultimately, Hullman writes, the message of Tufekci's presentation is that "transitions are expensive: breaking a flawed mechanism doesn’t automatically produce a better mechanism. Good enough AI at scale can destabilize the scaffolding of effort and authenticity long before anything like AGI arrives — if it ever does." The hard part about the kinds of nightmares that Tufekci is talking about is that they aren't as obvious as killer robots or a giant computerized brain taking over the world. They are almost hidden in a way, underneath and behind the way things work, and artificial "good enough" intelligence can change those routines and expectations just a little bit, and then over time more and more, until we can't even articulate how things have changed, but we know that they have. As with the printing press and the automobile, the world will just be irrevocably altered in ways that we won't appreciate for decades or possibly centuries.

The Atlantic's Alex Reisner wrote about Tufekci's talk and how a man in the audience said he and others knew of these kinds of concerns around trust and truth. But Tufekci said she mostly heard people in the AI field talking about mass unemployment vs human extinction. "It struck me that both might be correct," The Atlantic writer said. "Many AI developers are thinking about the technology’s most tangible problems while public conversations about AI — including those among the most prominent developers themselves — are dominated by imagined ones." Even the conference’s name contained a contradiction, Reisner noted: The name NeurIPS is short for Neural Information Processing Systems, but artificial neural networks were conceived in the 1940s "by a logician-and-neurophysiologist duo who underestimated the complexity of biological neurons and overstated their similarity to a computer. In the AI discourse, science fiction often defeats science." Because let's face it — science fiction is more interesting!
