Social media may be bad for you but the remedy could be worse

Meta has been found liable in two separate but related cases involving the alleged harms of its social platforms in the past couple of weeks. In one, a jury found that Facebook and Instagram harmed a young user with features that were addictive and either caused or exacerbated her mental distress. Meta had to pay the relatively paltry sum of $4.2 million (YouTube, which was also part of the suit, had to pay $1.8 million), but the dollar value, a sum that Meta likely makes every 10 minutes on the average day, isn't the important part. In many ways, the decision was a landmark ruling, and when combined with the second case against Meta it could either trigger or help fuel a firestorm of related lawsuits. In the second case, a jury found that the company failed to protect its younger users from child predators, and Meta was told to pay $375 million.

Governments have been trying for at least a couple of decades to go after social platforms, arguing that they cause significant harm to users, especially younger ones. The problem is that for the last 30 years, digital platforms have been protected by something called Section 230, a clause in the Communications Decency Act of 1996, which in turn is part of the Telecommunications Act. Section 230 has been called "the 26 words that created the internet" (which is good or bad, depending on whether you like the internet or not). Without going into detail, the clause says that digital platforms like Meta and TikTok aren't responsible for the content that their users post (it also protects people who write blogs and newsletters, but that often gets overlooked). Critics have called it a get-out-of-responsibility-free card, but haven't managed to kill it.

So how have the courts in the two recent cases against Meta gotten past this restriction? By using an argument that gained currency in the 1990s, during lawsuits against big tobacco companies and asbestos makers. In effect, the lawyers in the cases against Meta didn't argue that the content on Facebook and Instagram harms people, because that is protected by Section 230 — instead, they argued that the actual structure of those social products is by itself inherently addictive and therefore damaging. In other words, the argument isn't that Facebook and Instagram are harmful accidentally, or because the company isn't paying attention, but because they are harmful by design. As the New York Times explained in an analysis of the two recent cases:

The lawsuits claim that social media features like infinite scrolling, algorithmic recommendations, notifications and videos that play automatically lead to compulsive use. The plaintiffs contend that the resulting addiction has led to problems like depression, anxiety, eating disorders and self-harm, including suicide. The cases have drawn comparisons to those against Big Tobacco in the 1990s, when companies like Philip Morris and R.J. Reynolds were accused of hiding information about the harms of cigarettes.

If you dislike social media, these rulings might be cause for celebration, and many people responded (ironically, on social media) in that way. After all, shouldn't these companies have to answer for what they've created? They are worth trillions of dollars, they routinely surveil their users for advertising purposes, and they collect all kinds of information that they use in other ways. Instagram has been implicated in cases of mental distress and depression involving young women (although as I've argued, the evidence for this is slim at best) and Meta's leadership has made it clear in internal memos introduced in court that it doesn't really care. That said, I think the approach these cases take is fatally flawed, and that we could all wind up worse off as a result.

Note: In case you are a first-time reader, or you forgot that you signed up for this newsletter, this is The Torment Nexus. Thanks for reading! This newsletter survives solely on your contributions, so please sign up for a paying subscription or visit my Patreon. I also publish a daily email newsletter of odd or interesting links called When The Going Gets Weird.

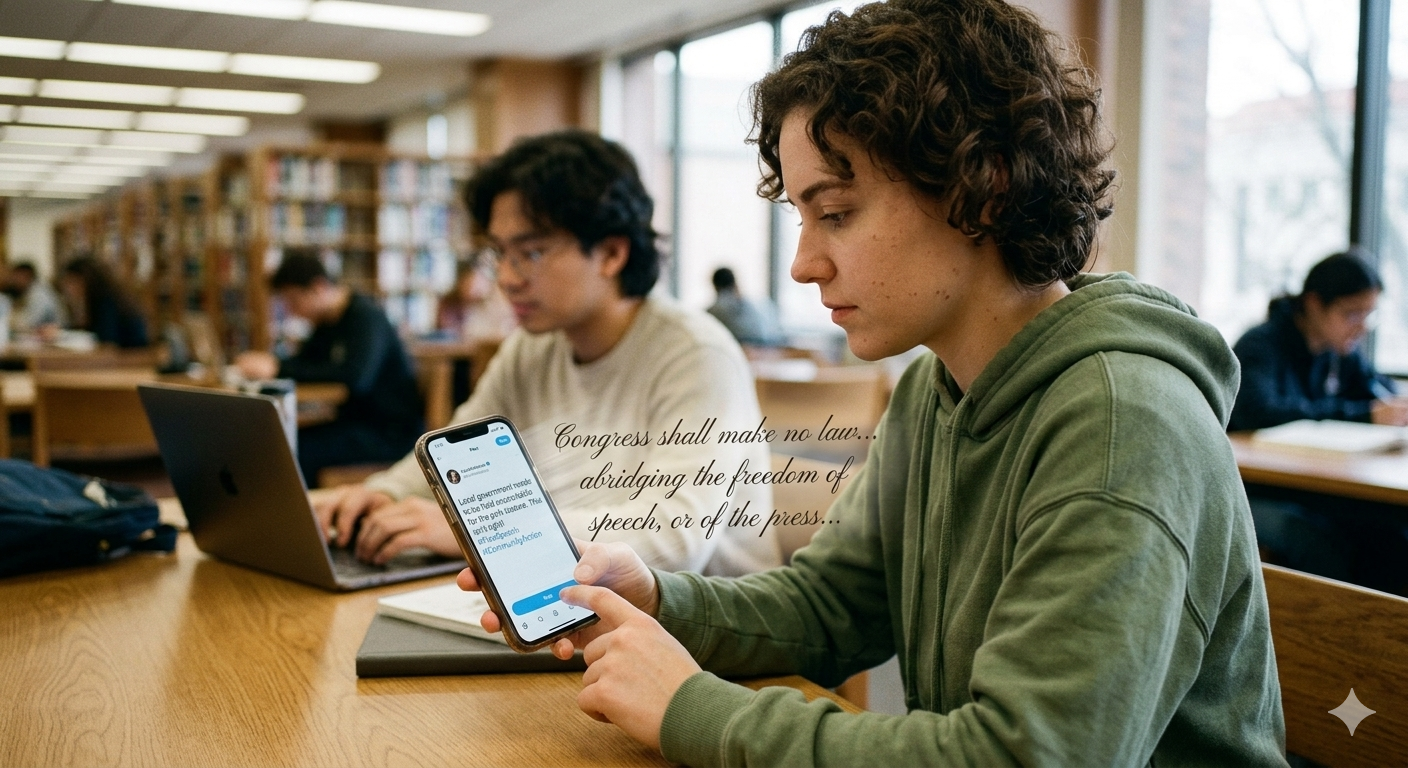

An end-run around the First Amendment

To me — and a number of others — there are a couple of significant flaws with these two cases, and the thousands more that are likely to follow them now that they have proven successful (although they will of course be appealed). The most obvious one is that the argument about Facebook or Instagram or YouTube being harmful by design is a transparent attempt to get around both Section 230 and the First Amendment's protection of freedom of speech, which these rulings threaten in a variety of ways. As Elizabeth Nolan Brown wrote at Reason magazine, it's obvious that a big part of what the lawyers in these cases were arguing was harmful was the actual content that users were allegedly harmed by and/or addicted to, not the mechanical function of an algorithm or the structure of the social network's website or apps or other features.

What is it that supposedly causes "addiction" if not the content that teens encounter on these platforms? What is it that allowed New Mexico authorities to pose as a 13-year-old girl and receive private messages if not a neutral communications platform? What are the features that New Mexico complains about — like livestreaming and ephemeral content and filters — but tools for user expression? And what else are social media platforms supposed to do if suits like these keep succeeding but make sure that the ideas their users put out in the world couldn't possibly be interpreted or used to some harmful end? As with regulating the ideas in books, the end result would be to limit everyone's access to ideas and communications online.

As my friend Mike Masnick put it in a post at Techdirt, imagine that you open Instagram and everything looks the same, but every single post is a video of paint drying. So there is the same infinite scroll, and the same autoplaying of videos, and the same kinds of algorithmic recommendations and intrusive notifications, following and liking features, etc. "Is anyone addicted or harmed? Is anyone suing?" Masnick asks. "Of course not. Because infinite scroll is not inherently harmful. Autoplay is not inherently harmful. Algorithmic recommendations are not inherently harmful. These features only matter because of the content they deliver. The addictive design does nothing without the underlying user-generated content that makes people want to keep scrolling."

Brown notes that historically, liability for products that are harmful by design has been applied to things that have physical dangers the manufacturer or seller has hidden from consumers. "Product liability is generally imposed on tangible 'products' (think brakes, tires, dishwashers, etc.) with inherent and unreasonable dangers that are hidden to consumers," Tyler Tone of the Foundation for Individual Rights and Expression (FIRE) wrote in a recent post about AI chatbots. So a book that unexpectedly explodes when someone opens it would be good grounds for a product liability claim, Brown says, but "a book whose content inspires someone to act recklessly should not." People encounter distressing ideas in magazines, on TV, and in books, but no one is accusing publishers of unfair practices that harm people's mental health.

Facebook isn't a cigarette

In the Foundation for Individual Rights and Expression newsletter, Zoe Armbruster writes that while social media has obvious harms, it can also achieve positive things when used the right way. Social media is a kind of public square that can help people organize social protests, build communities, and participate in civic life, Armbruster writes: "When we understand it in those terms it's clear why we cannot tolerate government intervention that censors speech and decimates anonymity. Protecting teens is essential. Preserving free expression is essential, too." The vast majority of even harmful speech on social media is still protected speech that minors have a First Amendment right to access, Armbruster argues. If the Meta rulings succeed in changing the law, "we may address one problem while creating another: a more monitored, less anonymous, and ultimately less free digital environment for everyone."

Ari Cohn, a First Amendment lawyer, wrote that the verdicts are disturbing because "an Instagram post isn’t a cigarette [and] a YouTube Short isn’t a shot of whiskey." Social media platforms and the information they contain and connect people to are not tangible things that have physical impacts in the same way tobacco or alcohol do, he says. "No matter how you feel about social media, the minute we start treating speech as if it were just another physical product is the minute we hand the government the power to decide what we can read, watch, and say. That’s dangerous — and the First Amendment forbids it." And the ways that platforms display that content are editorial choices that are also protected by the First Amendment, Cohn writes. "Imposing liability because speech is too appealing would be a breathtaking incursion on free speech."

The second aspect of these cases that concerns me may not be as important as free speech, but I still find it frustrating: the focus on social platforms as being addictive, as addictive as nicotine or heroin, according to some of the lawyers who argue these kinds of cases. As Kevin Williamson at The Dispatch put it, social-media companies design products like Facebook and Instagram to be addictive, according to the plaintiffs, but Instagram clearly isn't addictive in the same way that cocaine or nicotine are. “Designed to be addictive is another way of saying that tech companies figure out what their customers respond positively to and then … do more of that.” The use of terms like "dopamine rush" makes it seem as though the addictiveness of social media is a scientific fact, but it is anything but. In fact, there's no evidence either that social media produces dopamine or that doing so causes any real physical addiction.

As Christopher Ferguson notes in a piece at RealClear Investigations, despite references in the media to how "dopamine surges" explain why you can't stop scrolling, or to claims that Silicon Valley does its best to exploit this brain chemical to get us hooked on its products, this is pseudoscience designed to "legitimize a moral panic about behaviors that trouble certain segments of society." Research shows that dopamine spikes caused by drugs and alcohol can help induce physical dependency, but there is no evidence that a similar thing happens with scrolling on Facebook or Instagram. “Addiction is an important clinical term with a troubled and weighty history,” writes neuroscientist Dean Burnett. “People enduring genuine addiction struggle to be taken seriously or viewed sympathetically at the best of times, so to apply their very serious condition to much more benign actions like scrolling TikTok makes this worse.”

In other words, social media is not really like tobacco or nicotine, and so legal remedies designed for them shouldn't be used to justify constraints on free speech. As Ari Cohn put it, parents may be worried about their children and their use of social media, but "outsourcing parental responsibility to lawyers, tech companies, and the government is the last thing Americans should do. Parents, not platforms, are in the best position to know what speech their children are capable of handling. And they are the ones who should be making decisions."

Got any thoughts or comments? Feel free to either leave them here, or post them on Substack or on my website, or you can also reach me on Twitter, Threads, BlueSky or Mastodon. And thanks for being a reader.