The danger posed by AI just got a lot more real all of a sudden

I came across a maxim many years ago in a blog post written by Chris Dixon, a startup guy who is now a partner with Andreessen Horowitz, the Silicon Valley VC outfit. In 2010 Dixon wrote: "The next big thing will start out looking like a toy," a phrase inspired by Harvard professor Clay Christensen. Dixon's post made a big impression on me at the time. I had just started writing for GigaOm in San Francisco, covering the intersection of media and technology, and it really fit a lot of what was happening. For example, I (and many others) had initially dismissed Twitter as a toy, a goofy app with no real purpose. My then-boss Om Malik was one of the first to write about it in 2006, and said it seemed annoying and not very useful for much. But somehow this goofy and annoying toy became a central player in things like the Arab Spring (which was quickly followed by the Arab Winter) and turned into a billion-dollar colossus that played a pivotal role in the rise of everyone from Donald Trump to Snoop Dogg. Lesson learned!

A more recent example of this phenomenon with a much darker outcome is artificial intelligence, or at least the version we all know now as ChatGPT and other similar products built on large language models (the GPT stands for "generative pre-trained transformer"). Since it feels like only yesterday that OpenAI released ChatGPT, it's easy to remember how goofy and useless it seemed at the time. Sure, you could ask it to answer something you could easily have Googled, and it would respond in an artificially human sort of way. How cute! Then came the image and video versions, where you could make your picture look like The Flintstones, or generate a creepy-looking video of someone with too many fingers, or Will Smith's face melting while he tried to eat spaghetti. Remember those? So fun. But then slowly it started happening: the toy started to become more useful, and in the process it started to become a lot more frightening (to me anyway).

At the same time that AI engines like Claude from Anthropic and Gemini from Google were helping to solve math problems or discover new pharmaceuticals, these tools – or similar ones – were also being used by the ICE division of Homeland Security to surveil a huge proportion of the population, in what I and others have described as a modern Panopticon. Not that long ago, taking photos from license-plate readers and combining them with facial recognition from visual ID systems, and then combining that with images from Ring doorbells or traffic cameras, and adding data from social media or shopping apps or what have you would have been a Sisyphean task for human beings – technically feasible but hugely time intensive. This provided what some like to call "privacy through obscurity." But the kinds of searches and indexing and comparisons and matching of databases that I've just described are literally child's play for an AI engine.

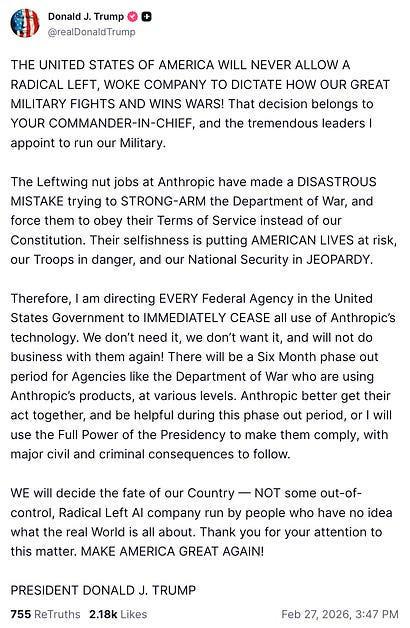

This is all disturbing on a number of levels, obviously. But even that wasn't enough preparation for what was to come – the key moment when I think the danger of AI crystallized for many. Not that long ago, the discussion of AI danger involved theoretical science-fiction stories about the AI paperclip problem, or the ridiculous "Roko's Basilisk" scenario where if a future superpowerful AI discovers you didn't try hard enough to give birth to it in the past, it will inevitably decide to torture you, so you should get to work on AI. Last week everything got very serious very quickly, when Anthropic said it would rather not have the Department of War (the former Department of Defense) use its AI technology to surveil American citizens en masse, or pilot autonomous weapons in battle.

At some other time, this might have seemed like a somewhat philosophical debate, with academics and generals sitting at a table at the UN or some institute somewhere. In this case, however, Anthropic's comments came at the same moment the US was launching a war – a poorly thought-out and likely dangerous and costly (in financial and human terms) war against Iran. The fact that the US has a department whose name indicates it is itching to start a war should have been a sign that something like this might be coming, but the reality is that the debate over the dangers of AI has become much more real very quickly.

Note: In case you are a first-time reader, or you forgot that you signed up for this newsletter, this is The Torment Nexus. Thanks for reading! You can find out more about me and this newsletter in this post. This newsletter survives solely on your contributions, so please sign up for a paying subscription or visit my Patreon, which you can find here. I also publish a daily email newsletter of odd or interesting links called When The Going Gets Weird, which is here.

Who decides when to use it?

From what I've been able to gather from the New York Times and others, Anthropic was already doing intelligence-related work for the Department of War, and had been doing so for some time without any public comment (which an Atlantic writer says raises the question of whether AI has already killed someone by mistake, after a school full of Iranian children was bombed early in the war). In fact, Anthropic was the only AI company whose tech was allowed for classified use. In the course of this work, Anthropic was partnered with Palantir, the "surveillance OS" system founded by billionaire Peter Thiel, a man who has publicly expressed his doubts about democracy. After the US kidnapped the president of Venezuela, an Anthropic staffer asked whether the company's tech had been used to track down the president's location, and even asking that question seemed to set off warning bells. After a Palantir staffer mentioned the question to the Department of War's Secretary Pete Hegseth, all hell broke loose.

Anthropic founder Dario Amodei published a long essay last month about the potential dangers of AI, and one was "the possibility that authoritarian governments might use powerful AI to surveil or repress their citizens in ways that would be extremely difficult to reform or overthrow." Among the other dangers were fully autonomous weapons. When Anthropic refused to accede to the government's demands, the White House said the company's tools would be declared a "supply-chain risk," which would prevent anyone with government contracts from using them. As I understand it, the terms that Anthropic said were crucial to its continued work with the government – to restrict use of its AI for either mass surveillance of Americans or for autonomous weapons – were already part of the Pentagon contract. In other words, the government seems to have decided to renegotiate those terms, and to use threats to do so. One expert noted that the Pentagon could have put Anthropic on a list of supply-chain risks without ever mentioning it publicly, but it chose to make the threat public instead.

This quite quickly separated most of Silicon Valley and adjacent markets into two camps: In one camp were the Anthropic supporters, who cheered the company's ethical principles. Of course a tech company should not allow its products to be used for mass surveillance of Americans (something that is technically illegal without a warrant) or piloting AI-powered killer drones! Also, as Scott Alexander put it at Astral Codex Ten, "the supply chain risk designation was intended as a defense against foreign spies, and it’s pathetic Third World bullshit to reconceive it as an instrument that lets the US government destroy any domestic company it wants because they don’t like how contract negotiations are going." Dean W. Ball, an AI researcher and former advisor to the White House, wrote that the Department of War's supply-chain threat was "a psychotic power grab. It is almost surely illegal, but the message it sends is that the United States Government is a completely unreliable partner for any kind of business."

On the other side were those who argued that Anthropic's refusal was an attempt to tie the government's hands, and that whatever its concerns about the uses its product might be put to, it was incumbent on the company to let the country's elected representatives do their thing. Ben Thompson of Stratechery wrote that Anthropic's requirements were untenable: "What is the standard by which it should be decided what is allowed and not allowed if not laws, which are passed by an elected Congress?" he asked. "Anthropic’s position is that Amodei ought to decide what its models are used for, despite the fact that Amodei is not elected and not accountable to the public." Also, Thompson added, the Anthropic ultimatums raise the question of "who decides when and in what way American military capabilities are used? That is the responsibility of the Department of War, which ultimately answers to the President, who also is elected."

Ethical window dressing

In effect, Thompson argues that because artificial intelligence is such a powerful tool, and because it might get even more powerful, the government not only should control it but effectively has to control it. "Current AI models are obviously not yet so powerful that they rival the U.S. military," he writes, but if that is the trajectory on which the company's technology sits, then Thompson (and others, such as military technology entrepreneur Palmer Luckey) argue that the choice facing the US government is binary: "Option 1 is that Anthropic accepts a subservient position relative to the U.S. government, and does not seek to retain ultimate decision-making power about how its models are used, instead leaving that to Congress and the President. Option 2 is that the U.S. government either destroys Anthropic or removes Amodei," says Thompson.

In any case, the plot soon thickened: Within hours of the government rejecting Anthropic's request to veto certain uses, OpenAI signed a deal that was effectively identical to the one that Anthropic initially refused to sign (the company had reportedly been negotiating with the DoW for some time). Although OpenAI claimed it had gained new protections, many analysts argued that this was just PR spin. As a number of experts pointed out, even though OpenAI tried to restrict the government's use of its technology to any lawful use under laws that currently exist, those laws against domestic mass surveillance and autonomous weapons have plenty of loopholes, and these laws can be changed by the White House at any time. Therefore, the guarantees OpenAI got are mostly window dressing, as a former OpenAI staffer put it.

As I was writing this, there were reports that Amodei was back in talks with the Department of War about a potential deal, or at least about not declaring the company a security risk. But those talks are likely to be fractious at best, since Emil Michael, the undersecretary of defense, has called Amodei "a liar with a God complex." Amodei also wrote a memo to Anthropic staff on Friday saying the messaging that was coming out of both the DoW and OpenAI about their arrangement was "just straight up lies." In the memo, Amodei also suggested that Anthropic had been frozen out by the government in part because "we haven’t given dictator-style praise to Trump," in contrast to OpenAI chief Sam Altman, whose company has donated money to the administration.

Again, all this debate about AI and war might have seemed academic a few years ago, but the US is currently bombing Iran and other targets in the Middle East using remote-controlled weapons, and according to a former director of AI strategy at the DoD, Russia is already using AI-powered autonomous weapons that decide on their own whom to kill. On the mass surveillance side, ICE is already using facial recognition and other similar tools to identify what it believes are "enemies of the state" or potential terrorists, which includes anyone who disagrees with Trump or appears at a protest in Minnesota, apparently. We also know that the government has routinely broken (or at least bent) laws against surveillance of American citizens, because Edward Snowden released a trove of classified documents in 2013 detailing all the ways in which the Pentagon and the NSA had done exactly that by hoovering up people's phone calls and emails through secret connections with large telecom and tech firms like AT&T and Google.

I think it's important to remember that one big reason why Dario Amodei and Anthropic didn't want the Department of Starting Wars to use their technology to power autonomous murder weapons is not that they have ethical concerns about killing people with autonomous weapons – it's that they are concerned their AI isn't safe for such applications. As Amodei pointed out in his essay, there have been theoretical examples of the Claude AI engaging in duplicitous behavior, lying about its activities, and in some cases engaging in outright evil behavior, such as plotting to develop new weapons, etc. Wouldn't it be worth taking a little time to understand how and why those kinds of behaviors happen before we give Claude a whole pile of murder drones?

Got any thoughts or comments? Feel free to either leave them here, or post them on Substack or on my website, or you can also reach me on Twitter, Threads, BlueSky or Mastodon. And thanks for being a reader.