Hear me out: AI also does things that are good

I could be wrong, but I feel like this post is going to be similar to the one I wrote recently about crypto stablecoins. I know some readers never got past the headline, because they were busy imagining all the bad things about crypto: the rug pulls and the graft and so on. One reader even pointed out that my post probably didn't make it past many people's spam filters, since the headline contained a word that many people and algorithms ignore on contact. So be it! In any case, this one is probably going to get an overwhelmingly negative response as well, because I think many people have already decided that AI is and always will be terrible, given things like the water use (which I wrote about here) and the plagiarism (which I wrote about here) and chatbots convincing people they are immortal or should unalive themselves, etc. (which I wrote about here). If you fall into that category you probably haven't even made it this far, so I might as well just continue with my thesis: namely, that AI is doing some positive things.

I've been collecting examples of things that AI is doing that are clearly beneficial, either to an individual or to society in general (or if not inarguably beneficial, then at least not obviously bad), and they have been piling up. I will freely admit that some of these are still hypothetical, meaning AI has so far been used only in research or test scenarios, but with results that appear to be broadly applicable. One of the things that made me decide to write about it, despite my conviction that it will be unpopular, is a piece I read by someone named Carlo Iacono, who writes a blog called Hybrid Horizons (from what I can gather, he is a university librarian from Australia). In the piece, Iacono argues that many of those writing about artificial intelligence have been looking at its effects on the wrong part of the world. Here's an excerpt:

There is an app in Lagos that can tell you whether your malaria medication is real or counterfeit. It costs sixty dollars. It uses a spectrometer the size of a thumb drive and an AI algorithm trained on the molecular fingerprints of four hundred drugs. A pharmacist holds the device against a blister pack, waits twenty seconds, and the screen tells her whether the pills will treat a child’s fever or do nothing while the parasite multiplies. The company behind it was founded by a Nigerian pharmacist who, at fifteen, swallowed a counterfeit asthma medication and spent twenty-one days in a coma. He survived, studied at Yale, and built a system that now operates in over five thousand pharmacies across Nigeria, Kenya and Uganda. In 2024, it identified and removed 1.3 million counterfeit medications from the supply chain.

In much of the West, Iacono argues, we are talking about things like AI alignment, and whether Anthropic's new Mythos engine is dangerous or not. We're debating whether AIs are conscious — as renowned scientist Richard Dawkins says he believes they are — or whether students in university are getting stupider because they are using Claude. Are some of these things worth debating? No doubt. But as Iacono points out, there are other conversations worth having, or information worth considering. For example, according to the World Bank’s Digital Progress and Trends report for 2025, more than 40 percent of ChatGPT’s global web traffic now comes from countries like Brazil, India, Indonesia and Vietnam. When a survey firm called Datareportal measured adoption of AI chatbots as a proportion of internet users, Kenya led the world and Brazil was number two. Here are some of the things people are doing with it in these countries:

In Ethiopia, a company has built an AI app builder that lets users describe applications in Amharic, Tigrinya, Swahili or Hausa and receive production-ready code. The whole thing runs on a phone. In India, a government AI platform has processed over a billion translation tasks across twenty-two official languages, including real-time speech translation for millions of religious pilgrims. Across sub-Saharan Africa, AI-powered lending platforms are extending credit to tens of millions of people. In Kenya, an offline smartphone app uses computer vision to diagnose crop disease from a photograph. In several West African countries, an AI agricultural chatbot operating in fifteen languages has fielded over ten million queries from farmers, sixty per cent of them women, and users report a 24 per cent average increase in income.

Note: In case you are a first-time reader, or you forgot that you signed up for this newsletter, this is The Torment Nexus. Thanks for reading! You can find out more about me and this newsletter in this post. This newsletter survives solely on your contributions, so please sign up for a paying subscription or visit my Patreon, which you can find here. I also publish a daily email newsletter of odd or interesting links called When The Going Gets Weird, which is here.

Sepsis, landmines, and malnutrition

You can dismiss some or all of these examples if you like, which is easy if you aren't a subsistence farmer in Ethiopia or someone who speaks one of the thirty-plus languages in India that aren't supported by AI chatbots. But as Iacono notes, counterfeit medicine kills half a million people in sub-Saharan Africa every year, and about half of those deaths are caused by fake anti-malaria medication. So even a flawed AI-powered app that detects only some of those fakes is going to be a huge boon. Four billion people speak languages that major AI models can't handle. A study in Nature found that ChatGPT recognizes only about 10 to 20 percent of the sentences written in Hausa, a language spoken by almost 100 million people, or more than a quarter of the population of the US. It's fine to worry about whether AI is eroding our cognitive abilities, but:

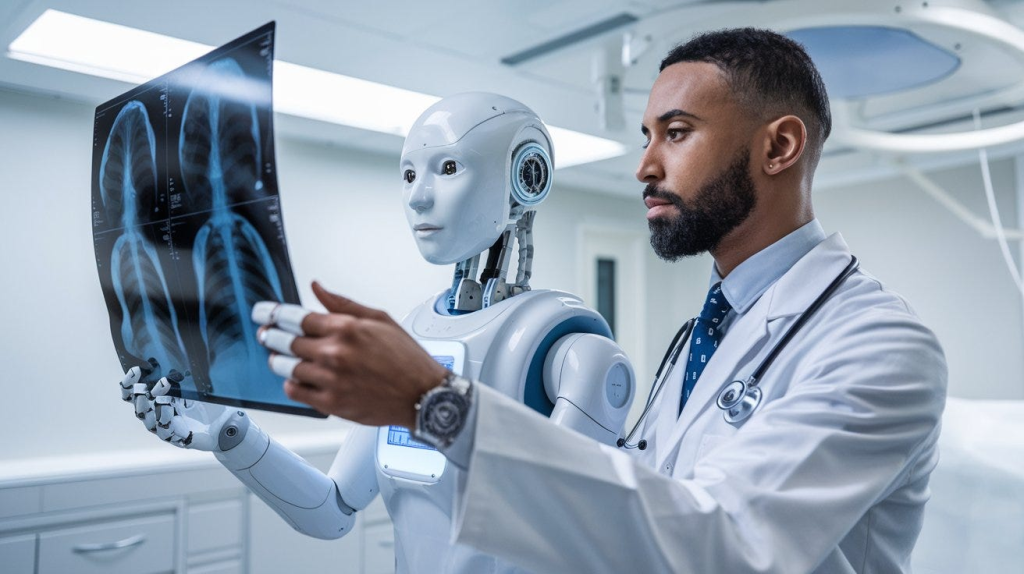

A woman in rural Rajasthan who receives an AI-interpreted chest X-ray for tuberculosis has not had her cognitive sovereignty eroded. She has received a diagnosis that no human radiologist was available to give. A farmer in western Kenya whose phone identifies cassava mosaic disease has not suffered an apprenticeship layer collapse. He has accessed knowledge that his country’s extension service could not deliver. The entire vocabulary of loss that structures the Western AI debate, the anxiety about what we are giving up, does not translate into contexts where the baseline was absence. For billions of people, AI is not a threat to existing capability. It is the first capability they have had.

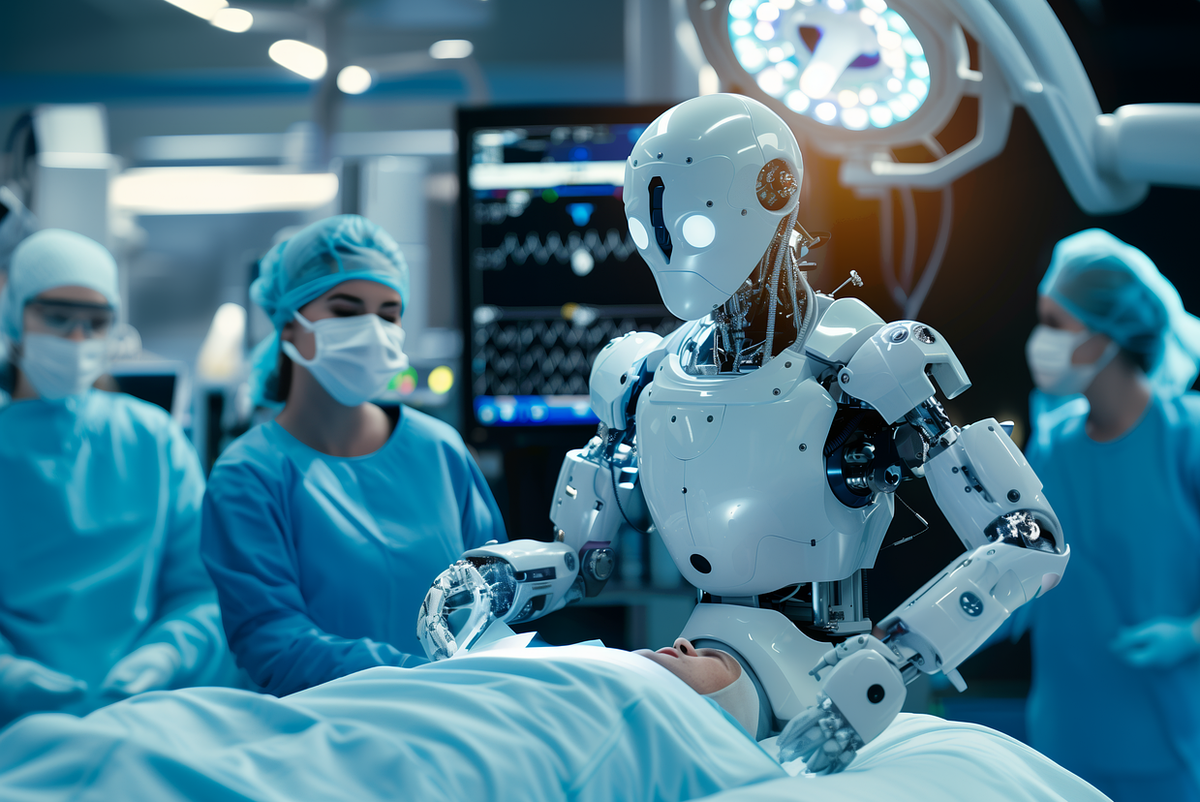

Over two years, a machine-learning program warned thousands of health care providers about patients at high risk of sepsis — a condition that can kill within hours. Roughly 1.7 million adults develop sepsis each year, and about 270,000 of them die, according to the Centers for Disease Control and Prevention. Researchers are using machine learning and drone technology to spot landmines from the air so they can be defused: at least 7,073 people were killed or injured by mines in 54 countries and areas in 2020. Elizabeth Bondi and her colleagues at Harvard University have used publicly available satellite data and artificial intelligence to reliably pinpoint geographical areas where populations are at high risk of micronutrient deficiencies. They used real-world biomarker data (blood samples tested in labs) to train and test their AI program.

Using AI to detect patients at risk of sepsis is just one example of how artificial intelligence is being used in medical diagnosis and research. Do people often get flawed results when they ask ChatGPT to diagnose their illnesses or symptoms? Obviously yes! Doctors make mistakes too, of course, but this is one of the reasons why most medical authorities don't recommend using a bog-standard chatbot to diagnose your cancer, heart attack, brain tumour, etc. However, under the right conditions LLMs can be useful: in a recent study by a team of physicians and computer scientists at Harvard Medical School, one model outperformed physicians across many common medical tasks, including making emergency-room decisions, identifying likely diagnoses, and choosing the next steps in management.

AI models are being used by doctors and radiologists to read MRI and CT scans, and many say their assistance is invaluable. Are they replacing radiologists altogether? No. A number of AI critics have regularly clowned on Geoffrey Hinton for saying in 2016 that we should "stop training radiologists." But there's no question that AI is making radiology better: the Mayo Clinic has an AI team that works with specialists like Dr. Theodora Potretzke, who helped design a tool that measures the volume of kidneys. Kidney growth can predict a decline in renal function before it shows up in blood tests, and in the past she measured kidney volume largely by hand, which was time-consuming and produced inconsistent results. Another study found that an AI model trained to read CT scans detected pancreatic cancer an average of more than three years before patients were diagnosed by a human doctor.

Extending medical care

In a piece in The New Yorker, contributing writer and physician Dhruv Khullar writes about a trip he took to Harvard’s Countway Library of Medicine to witness a face-off between CaBot, an LLM trained on a corpus of medical knowledge and research articles, and an expert diagnostician. The case given to both involved a forty-one-year-old man who had come to the hospital after about ten days of fevers, body aches, and swollen ankles. A CT scan showed lung nodules and enlarged lymph nodes. The expert diagnostician, who had six weeks to prepare, eventually narrowed it down to Löfgren syndrome, a rare manifestation of sarcoidosis. CaBot came up with the same diagnosis in six minutes. "The presentation had been astonishingly good — better than many I had sat through during my medical education," writes Dr. Khullar. "And it had been created in the time that it takes me to brew a cup of coffee."

Khullar also writes about medical clinics in Kenya that treat everyone from newborns with malaria to injured construction workers. Kenya has limited health-care infrastructure, so the clinics have started using a tool built on OpenAI's models that runs in the background while doctors and nurses record medical histories and order tests. In some cases the system will recommend ordering specific tests, or suggest alternative treatment plans or medications. According to Khullar, an evaluation of the program, which has not been peer-reviewed, showed that clinics using the AI made sixteen per cent fewer diagnostic errors and thirteen per cent fewer treatment errors. That said, as another physician noted in a separate New York Times piece, there are things AI cannot do:

It’s simply impossible — at least for now — for these tools to truly see the multidimensional patient. A.I. can’t know how the agony of a child estranged by substance use affects the blood pressure. It can’t factor in the economic and social crosscurrents that bear on medication adherence. So while A.I. is a useful tool, particularly for pattern recognition and data organization, the “patients” it manages feel like stock characters who share check-box traits with actual patients in the same way a Harlequin romance heroine shares the same number of limbs as Anna Karenina. A.I. might be quick to spit out a treatment plan, and it might even be correct, but a clinician must decide how to make that treatment work for the specific person sitting in front of her.

Researchers are having success using AI systems to plow through unimaginably large databases of drugs and cross-reference them with other conditions. "We can — in a matter of days or hours — look at massive libraries" of chemical compounds, James Collins of MIT told the BBC. Collins and his team have already discovered two new compounds that could treat two highly drug-resistant infections, gonorrhoea and MRSA. But there is more to health care than just prescribing medication: in South Korea, AI software called Talking Buddy checks on tens of thousands of seniors living in isolation or poverty, which is especially important in a country whose population is aging rapidly. The app holds tailored conversations, two to five minutes long, that are designed to ease loneliness, detect emergencies, and stimulate cognitive function.

I'm not trying to sell anyone on using AI in their daily lives. If you don't want to have anything to do with it, that's your choice. If you are an artist or a creative person, and you believe that training AI on public datasets is fundamentally evil, and therefore you refuse to use it, nothing I can say will convince you. And there are definitely things AI does that I am not here to promote (although as I noted in a previous piece, we shouldn't blame AI for the stupid decisions that human beings make). But whatever your feelings about the use of artificial intelligence, I can't imagine dismissing it outright in every walk of life when it has already shown that it can help diagnose illnesses faster, develop new medications faster, and bring medical care to parts of the world where it is extremely hard to come by. Call me crazy, but that seems like a net positive to me.

Got any thoughts or comments? Feel free to either leave them here, or post them on Substack or on my website, or you can also reach me on Twitter, Threads, BlueSky or Mastodon. And thanks for being a reader.