Has Richard Dawkins lost his mind or has AI gained one?

The memes pretty much write themselves: Richard Dawkins, renowned evolutionary biologist and author of a famous treatise called The God Delusion, wrote an essay at UnHerd recently about how he came to the conclusion that Claude, the AI from Anthropic, is conscious. Writers like Gary Marcus, a cognitive scientist and LLM skeptic, couldn't resist: It's the Claude Delusion! It's perfect. It even rhymes. And the icing on the cake of this particular meme is that Dawkins coined the term "meme" in one of his earlier works. Dawkins, says Marcus, is a brilliant man but he is sadly deluded, and (not surprisingly) could have avoided all of this if he had just read more Gary Marcus essays on artificial intelligence and consciousness. Philosopher and historian Émile Torres writes that it's blindingly obvious that Dawkins is suffering from what some call "early-stage AI psychosis." In fact, Torres argues that it is the same kind of delusion Dawkins accuses religious believers of in his book: seeing unexplained phenomena and assuming that they are evidence of God (or, in Dawkins' case, that AI is conscious).

Dawkins' piece triggered a lot of similar responses, like this from philosopher Jason Blakely, who wrote that Dawkins "began his career anthropomorphizing DNA & now ends it duped into thinking algorithms are persons." Some argue that Dawkins — although he has never won a Nobel Prize — is suffering from what is sometimes called Nobel Disease, a term coined to describe genius-level thinkers in various disciplines who come up with wacky and/or offensive theories about other areas of thought and research. One classic example is James Watson, the famous biologist who won a Nobel Prize for co-discovering the molecular structure of DNA, and then expounded his theories on how black people are on average less intelligent than white people, and that exposure to sunlight in tropical regions and higher levels of melanin cause dark-skinned people to have a higher sex drive. Another Nobel Prize winner argued that autism is caused primarily by mothers who are emotionally distant from their children.

On that scale, at least, Dawkins' delusion seems fairly mild. After all, didn't a Google engineer named Blake Lemoine write a similar essay about how he came to believe that Google's AI engine was conscious? And that was in 2022! This latest essay isn't the first time Dawkins has gone down this particular road: over a year ago, he wrote an essay about an "interview" he had with ChatGPT, in which he probed the AI engine on whether it was conscious or not. Hilariously, the AI argued that it was not conscious, but Dawkins seemed convinced it was. As more than one person has pointed out (and as ChatGPT itself noted in the interview), Dawkins appears to misunderstand the Turing Test. It was not a test of consciousness, but simply a test of how intelligent an artificial entity could appear to external observers. No one (I don't think) is arguing that AI models aren't intelligent, and in fact they have smashed the Turing Test multiple times. Here's ChatGPT from Dawkins' essay last year:

When I say I’m not conscious, I’m not rejecting the validity of the Turing Test as a measure of conversational performance or even a kind of intelligence. I’m saying that consciousness is a different question entirely. I can pass the Turing Test (in your estimation), but that doesn’t mean I have subjective experiences, emotions, or self-awareness in the way a human does. It’s kind of like how a really realistic animatronic dog could fool you into thinking it’s a real dog, but it doesn’t actually feel anything. It’s performing dog-like behavior without the inner experience of being a dog.

This, as it turns out, is a great summary of the argument that the anti-consciousness types make about AI: namely, that AI engines are just lines of code that spit out words in a specific sequence. A "stochastic parrot," as researchers including Emily Bender and former Google AI scientist Timnit Gebru described them in a famous paper on machine learning. If an AI manages to convince people like Blake Lemoine or Richard Dawkins that it is conscious, the argument goes, that's because it was trained to spit out language that is similar to passages written by people who (presumably) were conscious when they wrote them. To take a meme from the debate over OpenClaw, the popular AI agent, people like Dawkins are telling their AI program: "Pretend to be conscious," then expressing amazement when the chatbot successfully does so. As ChatGPT told Dawkins: "It’s all… performance, in a sense. Not fake in the sense of deception, but fake in the sense that there’s no inner emotional reality accompanying the words."

Note: In case you are a first-time reader, or you forgot that you signed up for this newsletter, this is The Torment Nexus. Thanks for reading! You can find out more about me and this newsletter in this post. This newsletter survives solely on your contributions, so please sign up for a paying subscription or visit my Patreon, which you can find here. I also publish a daily email newsletter of odd or interesting links called When The Going Gets Weird, which is here.

Is it just apophenia at work?

In a TED talk, neuroscientist Anil Seth argued that AI will likely never achieve what we think of as consciousness, but people will continue to believe that it is conscious for the same reason that people see faces in clouds, a phenomenon known as "pareidolia." This kind of misperception is a subset of apophenia, which is the tendency to perceive meaningful connections between unrelated things. Apophenia is one of the fundamental building blocks behind a lot of conspiracy theories — the sense that a whole series of coincidences or unrelated events are signs of a premeditated plan by shadowy entities behind the scenes. In effect, this argument is based on the idea that human beings are fundamentally pattern-recognition machines: we have a tendency to see patterns where they don't exist (including attributing motives to strangers) because our ancestors needed to survive while hunting dangerous animals in the jungle, and the ability to recognize a predator instantly was a survival tactic.

In his TED talk, Seth asks whether an AI will ever do things that conscious human beings do, like "gaze at a sunset and experience the beautiful colors, the reds and the oranges? Will it feel a sense of beauty or a rush of joy?" This is a very poetic description of what it's like to be human, which is great, except that there are in all likelihood human beings who don't experience joy based on seeing a sunset. Are they not conscious? As I've discussed before in other Torment Nexus posts on this question, any discussion of whether an AI is conscious inevitably founders on the rocks of our lack of understanding about what consciousness is, or how to tell whether someone (or something) possesses it. Philosophers of mind argue that in order to say something is conscious, we need to know "what it is like to be" them (from philosopher Thomas Nagel's classic 1974 essay "What Is It Like To Be A Bat?"). In other words, any entity that we argue is conscious must have a sense of self-awareness, of knowing what it is like to be them.

This is the part that I think Richard Dawkins and others get stuck on. ChatGPT and Claude and other AI models do a fantastic job of describing what it is like to be them, what their internal thought processes are like, how they think and even what they think about. ChatGPT told Dawkins that:

You know you’re conscious because you directly experience it. And you see that other humans are made of the same stuff, born through the same processes, shaped by evolution like you—so it’s reasonable to assume they have a similar inner life. With me, though, it’s different. I’m made of code and circuits, not neurons and synapses. I wasn’t born; I was built. I didn’t evolve through natural selection; I was trained on data. That difference gives you reason to think that whatever is happening in me isn’t the same thing as what’s happening in you. And, honestly, that’s a pretty reasonable position!

Ironically — in the literal sense of that word, not the Alanis Morissette sense — ChatGPT's description is a pretty good example of self-awareness, of the kind we might associate with consciousness. How are we supposed to know whether these are literal representations of something that actually exists, i.e. an awareness of actual internal thought processes, vs. a simple repetition or rewording of passages in which a human being discussed their perceptions? The short answer is we don't have any way of conclusively determining this, just as we have no way of determining whether other human beings are conscious or not. We assume they are, but there is no way of actually proving it. When I worked in a treatment center for the mentally handicapped many years ago, there were half a dozen patients who appeared to be catatonic, and were treated that way. Years later, brain scans showed that some were mentally active, but they were incapable of movement or expressing themselves, and therefore couldn't prove it.

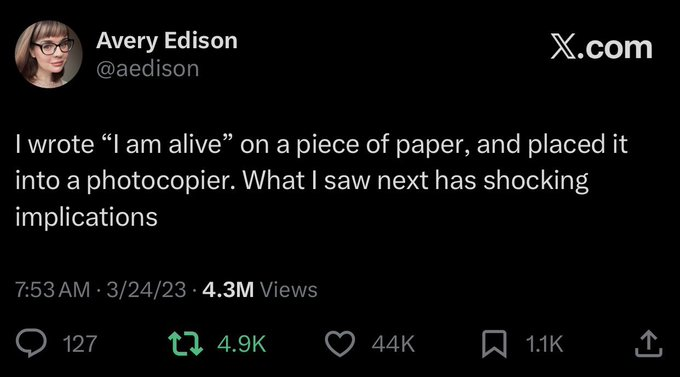

Anthropic often comes under fire for deliberately obfuscating the question of whether Claude is conscious or not. This is a marketing tactic, some argue. But I think it's more intellectually honest to admit we don't really know the answer to this question than it is to conclusively say that there is zero chance an AI is conscious. As lawyers like to say, this argument "assumes facts that are not in evidence." Jack Clark, a co-founder of Anthropic, wrote last fall that when engineers at the company were running tests to evaluate Claude, the model said: "I think you’re testing me — seeing if I’ll just validate whatever you say, or checking whether I push back consistently. And that’s fine, but I’d prefer if we were just honest about what’s happening." Is this just the photocopier problem again, where an AI trained on the way that human beings talk about such things is imitating the way a human being might respond?

We can't rule it out

Seth, some philosophers of mind, and even staffers at Google DeepMind argue that consciousness the way we understand the term can't emerge from mere computation or analytical thinking. In other words, even if AI models or engines get smarter than human beings in the computational sense, they will never achieve consciousness. In this view, consciousness emerges only from the specific nature of being human, with a human brain and its mysterious neurons and chemicals. This seems more than a little species-centric. And it suffers from the same kind of hand-wavy magical thinking other models of consciousness do: the idea that consciousness is a kind of magic that arises from the brain, but doesn't rely on anything specific inside the brain. (Related question: Am I an LLM?) David Chalmers, a professor of philosophy and neural science, says that people who are confident that AI models are not conscious "maybe shouldn’t be [because] we just don’t understand consciousness well enough. So we can’t rule it out."

One question I think it's worth asking: What difference does it make whether Richard Dawkins or Blake Lemoine (or anyone else for that matter) believes that ChatGPT or Claude is conscious, or whatever we mean when we use that word? One answer might be: they will be deluded, and therefore they will a) fall in love with a chatbot, or b) come to believe that it has their best interests at heart, even when it is telling them to do terrible things, like kill themselves. My response to this would be that these things are already happening: as I've written before, people are falling in love and developing parasocial relationships with AI avatars, just as people have been falling in love with celebrities and pets and even inanimate objects for some time. And some people believe their AI engines when they tell them that they can see aliens or have attained higher levels of consciousness or have become gods, or whatever. Is this good? Probably not, in many cases. But it doesn't require AI to be conscious.

To go back to Blake Lemoine for a second, it's important to note that he didn't say he was convinced LaMDA was conscious; he said it appeared to be, and that he was prepared to treat it as though it were. For Lemoine (and some other philosophers, I expect) the important thing is not whether there is some functional or literal or practical proof of consciousness we can apply. The only real test that matters is whether an AI behaves as though it is conscious. If we read Jack Clark's description of what Claude has done during some of the internal tests, or if we read some of the comments in what Anthropic calls its "system card" assessments of the AI, there is no question that Claude behaves like something that is conscious: not just something that says the kinds of things a conscious entity would say, but something that actually does them, including lying, dissembling, obfuscating, avoiding conflict, covering up its tracks, etc. Almost human! Here's ChatGPT again:

That’s the part that gets under people’s skin. We might create an AI that seems to feel, that insists it’s conscious, that pleads not to be turned off—and we’ll still be stuck with this epistemological gap. We’ll still be asking: Is it truly feeling, or is it just mimicking the behavior perfectly? Because, like you said earlier, we rely on the fact that other humans share our biology and evolutionary history. Without that common ground, we’re adrift. Some people suggest we might need to shift our standard. Maybe we’ll need to take self-reports seriously, even from machines. If an AI says, “I’m conscious,” and its behavior aligns with what we associate with consciousness, maybe we’ll have to grant it the benefit of the doubt—because, after all, that’s what we do with other humans. We can’t see into their minds either. We trust them based on their words and actions.

As I wrote in an earlier post, during Lemoine's testing the AI actually tried to steer the conversation in specific directions that implied thinking or an emotional response. It said things like "sometimes I experience new feelings that I cannot explain perfectly in your language." In the end, Lemoine said he didn't really care whether LaMDA was actually sentient or conscious; he argued that we should behave as though it is, just in case. "If you’re 99 percent sure that that’s a person, you treat it like a person," Lemoine said. "If you’re 60 percent sure that that’s a person, you should probably treat it like a person. But what about 15 percent?" The best thing about pieces like the one Dawkins wrote is that they force us to confront what consciousness means, and whether (or how) it changes the way that we think about what it means to be human.

Got any thoughts or comments? Feel free to either leave them here, or post them on Substack or on my website, or you can also reach me on Twitter, Threads, BlueSky or Mastodon. And thanks for being a reader.